2015 was a big year for Volt Active Data. Our product improved as we added exciting features and invested in making the product more robust. Our team has grown, and so have our user base, our customer count, and our revenue.

However, three things stand out that really make 2015 for me.

- The kinds of customers we are attracting have changed. We still attract early adopters and innovative startups looking for an edge, and we love working with those customers. But 2015 saw real growth in household names using Volt Active Data: Nokia, FlipKart, MachineZone, Sprint, Deutsche-Telekom, Qantas, CGI, Bayer, Huawei, and others. The most frustrating thing for me is the list of big-name customers I can’t share. The large banks are particularly secretive.

- Customers are happy with Volt Active Data. Don’t get me wrong, they all want a million things yesterday, but Volt Active Data is doing what we told them it would do. Customers are renewing and, in many cases, expanding their use of Volt Active Data, both through bigger clusters and additional use-cases.

- A large number of these customers are literally betting their businesses on Volt Active Data. Volt Active Data isn’t being used for some side stream processing project, or for a few counters here and there, but as the core of their operations. National stock exchanges, cellular networks, credit card processing: these kinds of applications will keep an engineer up at night. It’s incredibly validating to have these customers choose Volt Active Data, and it confirms what we know: other databases just can’t do what Volt Active Data does.

Application Patterns of Volt Active Data Applications

Also validating is the number of use cases that are repeating, demonstrating the application features that make Volt Active Data the best system for those problems. A few examples follow, each introduced by its own heading:

Policy enforcement, billing, accounting, and other problems where precision matters

When Volt Active Data says ACID transactions, it means the strictest interpretation possible: cluster-wide transactions with serializable isolation. Volt Active Data is the only well-known distributed operational database that offers this level of consistency – read this post from Peter Bailis for more.

Why is this important? It turns out that relaxed consistency and lack of strong transactional semantics make a lot of things harder. Doing something precisely once becomes hard, and so does doing something precisely n times. This kind of thing is just a small part of policy enforcement, which often involves complicated rules that must be precisely applied, concurrently.

Billing problems often introduce arithmetic. In a world where simple counting at scale is hard, doing actual mathematics is harder still. Yet Volt Active Data’s serializable execution model essentially lets you do whatever math you want. When the transaction commits, that’s it; it’s recorded.
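
To make the idea concrete, here is a minimal sketch in Python. It is not Volt Active Data code: the `Ledger` class, its `charge` method, and the request-id scheme are hypothetical, and a single lock stands in for serializable execution. It shows how, once each transaction runs alone from start to finish, "apply this charge precisely once" and "do real arithmetic on the balance" both become ordinary code.

```python
from decimal import Decimal, ROUND_HALF_UP
import threading


class Ledger:
    """Toy in-memory ledger; a single lock stands in for serializable execution."""

    def __init__(self):
        self._lock = threading.Lock()
        self.balances = {}    # account id -> Decimal balance
        self.applied = set()  # request ids that have already committed

    def charge(self, request_id, account, units, unit_price, tax_rate):
        """Apply a charge at most once per request_id; return True if applied."""
        with self._lock:  # one transaction at a time, start to finish
            if request_id in self.applied:
                return False  # a retry of a committed request changes nothing
            amount = (units * unit_price * (1 + tax_rate)).quantize(
                Decimal("0.01"), rounding=ROUND_HALF_UP)
            balance = self.balances.get(account, Decimal("0"))
            if balance < amount:
                # Nothing has been modified yet, so raising acts as an abort.
                raise ValueError("insufficient balance")
            self.balances[account] = balance - amount
            self.applied.add(request_id)
            return True
```

Calling `charge` twice with the same `request_id` debits the account once; the second call returns `False` and leaves the balance alone. Without serializable isolation, the check on `applied` and the balance update could interleave with another request, which is exactly the class of bug described above.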

For more examples and discussion about these kinds of applications and about how weaker ACID makes them harder to build, read through my last two blog posts.

Low latency decisions with very consistent long tail latency to meet SLAs

Typical Volt Active Data latency from client invocation to receipt of response is around 1-3 milliseconds, assuming both client and servers are co-located.

Volt Active Data isn’t the only system claiming latency like this, but if you look beneath the surface, what’s happening – and the work that’s accomplished – is really different. Where other systems are performing a key-lookup, or even a simple query, Volt Active Data is executing code that mixes logic, queries, writes and streams, all with the same low latency.

Consider mobile call authorization. Someone with a mobile phone dials a number, hits send, and then waits for the ringing to begin. In the time between hitting send and ringing, Volt Active Data receives a stored procedure invocation from the mobile carrier’s software. It runs a series of statements to check if the call is allowed to go through:

- Is it an acceptable destination number according to the caller’s plan?

- Does the caller have enough balance to cover the call if pre-paid?

- Does it pass the sniff-test for fraud?

Next, the stored procedure runs more SQL to record whether the call was approved, and, if so, to record any changes to the caller’s balance.

Finally, the response is sent from Volt Active Data to the carrier’s application, which connects the call. The user hears ringing and all is well. This all needs to happen in the blink of an eye, 50ms to be exact. And if Volt Active Data can’t respond in 50ms, then the call goes through anyway, leaving the carrier to eat the cost.
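
The flow above can be sketched as a single unit of work. This is an illustrative Python model, not a real Volt Active Data stored procedure: the `Subscriber` and `CallDb` types, the `authorize_call` function, and the `MAX_RECENT_CALLS` fraud rule are all assumptions made for the example. The point is the shape: several checks, then writes that record the decision, all in one invocation.

```python
from dataclasses import dataclass, field

MAX_RECENT_CALLS = 20  # hypothetical fraud threshold, for illustration only


@dataclass
class Subscriber:
    allowed_prefixes: tuple  # destination prefixes the plan permits
    prepaid: bool
    balance_cents: int
    recent_calls: int = 0    # calls placed in some recent window


@dataclass
class CallDb:
    subscribers: dict = field(default_factory=dict)
    decisions: list = field(default_factory=list)  # record of approvals and denials


def authorize_call(db, caller, destination, cost_cents):
    """Run every check, then record the outcome and any balance change."""
    sub = db.subscribers[caller]
    if not destination.startswith(sub.allowed_prefixes):
        reason = "destination not in plan"
    elif sub.prepaid and sub.balance_cents < cost_cents:
        reason = "insufficient balance"
    elif sub.recent_calls >= MAX_RECENT_CALLS:
        reason = "possible fraud"
    else:
        reason = None
    approved = reason is None
    if approved:
        if sub.prepaid:
            sub.balance_cents -= cost_cents
        sub.recent_calls += 1
    db.decisions.append((caller, destination, approved, reason))
    return approved
```

In this sketch the carrier-side 50ms timeout is left out; it would live in the calling application, which falls back to connecting the call if no answer arrives in time.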

This is different from so many of the event-driven architectures available. If you need a response in milliseconds, you need a system that can respond actively with almost boring consistency.

You’re not going to stick call requests from the carrier’s app in a Kafka log to manage ingest, then generate responses into another Kafka log that the carrier application reads. It may be possible to get the latency low enough in the best case, but today it’s impossible to make the long tail fall in line.

We’ve seen similar applications in payment processing: is this card swipe fraudulent? That decision needs to be made between the swipe and the approval of the card. Take too long and the line backs up. But do a poor or less-than-thorough job and the card issuer loses money.

We have customers using Volt Active Data for real-time micro-personalization. Volt Active Data is especially adept at combining insight from big-data machine learning and real-time event streams. For example, based on what the database knows about a user and based on the recent history of user actions, Volt Active Data can help applications and websites respond to user actions in real-time. On the web, Volt Active Data can make and record decisions between clicking a link and drawing a page. In a mobile game, it can determine what’s behind a door, or how much those enchanted ascots cost.

All in milliseconds, millions of times per second.

Virtualizable, containerizable and cloud friendly

Volt Active Data was designed from the beginning to scale out on commodity hardware and commodity networking. All of our releases have been tested in public clouds, with private virtualization, as well as on bare metal. This model extends to Docker and container tech as well.

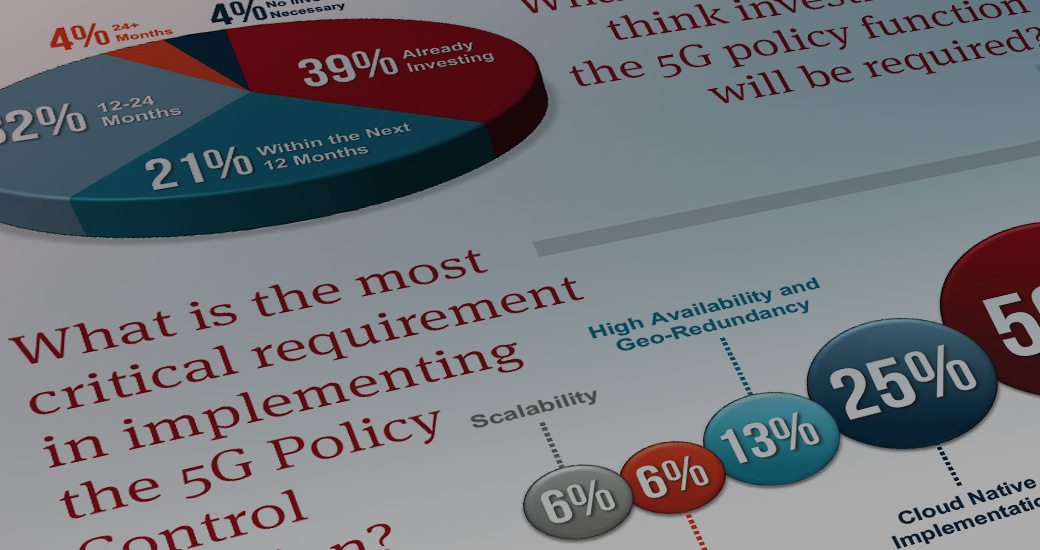

We’ve had tremendous success in telecommunications in 2015, where scalability/virtualization/containers/cloud are the key drivers. Telecommunications operators are rushing to virtualize and automate as much infrastructure as possible, whether that’s part of a specific NFV (Network Function Virtualization) effort, or simply an effort to modernize operations, cut costs and increase flexibility.

When you are running a datacenter with racks of virtualized commodity hardware running fully automated stacks, but you have to run big iron bare metal in the corner specifically to handle your database, you’re going to be looking for alternatives. I’d argue that a few years ago you didn’t have any. Now you have one.

Other systems can be virtualized, but they don’t offer the combination of low latency, stored logic, strong ACID, and very consistent latency profiles needed to replace the more traditional big iron systems.

A side note: if you’re in telco and not looking at Volt Active Data, know that your competition is. Transactions, throughput, predictable and low latency, all mixed with synchronous durability, horizontal scaling, and geo distribution make Volt Active Data a perfect match for telco operations.

Strong consistency and geo-distributed

Volt Active Data has offered active-passive multi-datacenter support for HA and redundancy for several years now. In 2015, we introduced Active-Active multi-datacenter support. The biggest driver was latency for geo-distributed clients. If you’re trying to make a phone call in California, you shouldn’t have to wait over 100ms to ask a Volt Active Data cluster in Virginia for authorization. With multiple Active-Active clusters, end users see lower latency and operators still get high availability and data redundancy. By default, Volt Active Data uses a “Last-Write-Wins” strategy to resolve conflicts between concurrent writes, and it logs every such decision for later review.
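
As a rough illustration of what “Last-Write-Wins” means, the sketch below resolves a conflict between a replicated write and the local row by keeping whichever has the later timestamp, and appends the decision to a log. It is a simplified Python model under stated assumptions, not the product's actual replication code: the `Write` record, the `base_timestamp` conflict test, and the tie-breaking rule (keep the local row) are all hypothetical.

```python
from typing import NamedTuple


class Write(NamedTuple):
    key: str
    value: str
    timestamp: int       # commit time of this write
    base_timestamp: int  # timestamp of the row version the writer saw (0 = new row)


def apply_remote_write(store, conflict_log, incoming):
    """Apply a replicated write; return True if the incoming value was kept."""
    current = store.get(incoming.key)
    current_ts = current.timestamp if current is not None else 0
    if incoming.base_timestamp == current_ts:
        # The remote writer saw the same row version we hold: no conflict.
        store[incoming.key] = incoming
        return True
    # Both clusters changed the row concurrently: the later write wins.
    incoming_wins = incoming.timestamp > current_ts  # ties keep the local row
    kept, discarded = (incoming, current) if incoming_wins else (current, incoming)
    conflict_log.append({"key": incoming.key, "kept": kept, "discarded": discarded})
    if incoming_wins:
        store[incoming.key] = incoming
    return incoming_wins
```

The log is what makes the default tolerable in practice: a discarded write is not silently lost, so an operator or a follow-up job can review it later.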

Only committed transactions are replicated to other clusters. This allows developers to build applications that are very unlikely to conflict, and in fact, work very much like single-datacenter Volt Active Data apps. It’s a bit more work for the application developer to add failover logic into their client applications, but building this kind of app on any platform is hard.

Immediately durable data, without big tradeoffs

Volt Active Data offers synchronous intra-cluster replication. In fact, there’s no asynchronous option. As for disk persistence, with a decent SSD, or with a decent flash/battery-backed disk-controller, synchronous disk persistence might add around 1ms of latency to operations, but typically won’t affect throughput.

This belt and suspenders approach is not just safer than what many systems offer, but also offers lower latency than many systems with weaker guarantees. This is accomplished using Volt Active Data’s unique take on determinism.

A system that doesn’t require installation of several other systems, doesn’t require a tremendous number of nodes, and is easy to manage

A common practice with less-capable databases is to pair them with another system that offers per-event processing or richer query support than the core database offers. Spark Streaming and Spark are a hot choice for these roles. To be clear, we like Spark. A number of Volt Active Data users use Spark (especially MLLib) for deep processing, with Volt Active Data for fast processing and immediate state.

But other databases are talking about joining with Spark on the operational side of things. Suddenly you’re talking about running two clusters (more if you count ZooKeeper). You need all clusters running smoothly for your operational stack to continue. You need the glue code between systems to run smoothly.

On the other hand, if you need per-event processing with exactly-once semantics with Volt Active Data, you don’t need anything extra.

If you need rich operational analytics with global aggregates and SQL features like subqueries, joins and rich predicates, you don’t need anything extra.

Within your Volt Active Data cluster, there are no leaf nodes and aggregator nodes. There are no primary nodes and replica nodes. There are no transaction nodes and storage nodes. All nodes are the same. When a node fails, replace it with a blank node and Volt Active Data will self-heal without compromising ACID or CAP consistency.

And there’s icing: Volt Active Data is extremely efficient per node. The net result is smaller clusters that are dramatically easier to manage.

The “AND” Database

Individually, some of the application patterns described above are solvable with other systems, but the advantage of Volt Active Data is that it’s an “AND” database. Our customers are using our headline features all at once in the same app.

Too many systems tell you:

- You can have strong consistency, but it’s going to cost latency and throughput.

- You can have effective exactly-once processing, but with major limitations on how you build your app and the state required to support it.

- You can have powerful queries, but only at individual nodes, not across the whole cluster.

Volt Active Data has customers in production whose apps combine world-class performance with the strongest consistency, write-heavy workloads, and multi-statement transactions. If that’s not enough, these apps use synchronous cluster replication and synchronous disk persistence, providing the highest levels of availability and safety. And if that isn’t enough, these applications often need to meet strict latency SLAs, such as 99.999% of transactions must respond within 50ms.
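
To put that SLA in numbers: 99.999% within 50ms means at most 10 slow responses per million transactions. A small Python check, with a hypothetical function name and parameters, might look like the following; it uses integer arithmetic so the comparison is exact.

```python
def meets_latency_sla(latencies_ms, threshold_ms=50.0, max_slow_per_million=10):
    """Return True if no more than max_slow_per_million of each million
    observed latencies exceed threshold_ms (10 per million = 99.999%)."""
    total = len(latencies_ms)
    if total == 0:
        raise ValueError("no latency samples")
    slow = sum(1 for latency in latencies_ms if latency > threshold_ms)
    # Cross-multiply instead of dividing to avoid floating-point rounding.
    return slow * 1_000_000 <= max_slow_per_million * total
```

Note how unforgiving this is compared with an average or even a 99th-percentile target: a sample of one million calls with eleven responses over 50ms fails the check.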

We’ve certainly bet hard on an unconventional architecture. On the one hand, we don’t focus on ad-hoc, analytical workloads; we integrate nicely with all kinds of systems that do those well, from relational column-stores to HDFS/Hadoop-ecosystem options like Impala and Spark.

But the payoff means we get to challenge conventional database wisdom. Over the years I’ve encountered so many people who just know what we do is impossible. “Synchronous”, whether network or disk, means higher latency, right? Stronger consistency means slower, right? All in-memory systems are the same, right?

Give Volt Active Data a try and challenge those assumptions.
