Application State Management and Data-Driven Decision Making

As applications and systems grow more complex, the teams that work on them grow too. In these scenarios, a monolithic system keeps the development team from moving forward at speed. This gave rise to the need for an approach that lets independent functional teams deliver their functionality in its entirety with minimal (if any) dependency on other teams. The industry has tried to address this goal many times over: think of Object-Oriented Programming, Service-Oriented Architecture, the Enterprise Service Bus, and now Microservices.

Real-World Example Problem

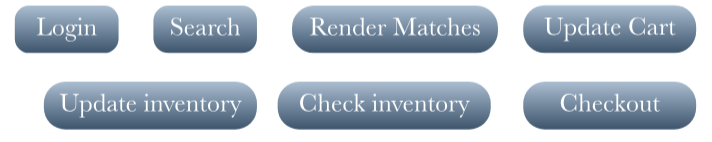

The premise of microservices is that everything related to a piece of functionality should reside together in a self-sufficient unit. Think of a monolithic eCommerce application (albeit with a super-simplified scope) that has modules for functions such as login, search, inventory check, cart management, checkout, and logout.

Each of these functions could have a different utilization level, and scaling the entire application to the maximum level consumes resources out of proportion to utilization. A person logs in once per session, but could end up searching many times, updating their cart less frequently, and ultimately checking out only once. Perhaps the user then logs out after checkout. In this case, the search and inventory check functions would be used a lot more than the others. By breaking the application down into self-sufficient services, i.e. microservices, each service can be scaled according to the load on its function.

But that fully self-sufficient nature means that each of these services has its own end-to-end technology stack, including the database. If the data is common to multiple services, the database layer is pulled out of the individual microservices in favor of a common shared data layer. This allows a consistent implementation of the Saga pattern, where the participating services can signal each other and operate off the same database.
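To make the scaling argument concrete, here is a rough back-of-the-envelope sketch in Python. The request rates and the per-copy capacity are invented for illustration, and the model is deliberately simple: it assumes one copy of a module can serve a fixed number of requests per second for its own function, and that every monolith replica carries a copy of every module.

```python
import math

# Hypothetical peak load per function, in requests per second.
# These figures are illustrative assumptions, not measurements.
PEAK_RPS = {
    "login": 100,
    "search": 2000,
    "inventory_check": 2000,
    "cart": 400,
    "checkout": 100,
}

# Assumed capacity of a single copy of any one module (requests per second).
MODULE_COPY_RPS = 500


def microservice_copies(peak_rps, copy_rps):
    """Each service scales on its own, so it only needs copies for its own load."""
    return {name: math.ceil(rps / copy_rps) for name, rps in peak_rps.items()}


def monolith_replicas(peak_rps, copy_rps):
    """The monolith scales as a unit, so the busiest function sets the replica count."""
    return max(math.ceil(rps / copy_rps) for rps in peak_rps.values())


per_service = microservice_copies(PEAK_RPS, MODULE_COPY_RPS)
replicas = monolith_replicas(PEAK_RPS, MODULE_COPY_RPS)

# Every monolith replica deploys every module, busy or not.
monolith_module_copies = replicas * len(PEAK_RPS)
microservice_module_copies = sum(per_service.values())

print("microservices:", per_service, "total copies:", microservice_module_copies)
print("monolith:", replicas, "replicas,", monolith_module_copies, "module copies")
```

Under these assumed numbers, the monolith deploys 20 module copies while the microservice layout deploys 11, because login, cart, and checkout no longer have to be replicated just to keep up with search.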

Problem solved, right?

Close, but not quite. Some domains have further requirements for high performance:

- Telecom:
  - vEPC or 5G Core
  - IMS
  - OSS/BSS
  - Call fraud prevention
- Financial Services:
  - Credit card fraud prevention
  - Portfolio risk management
  - Real-time slippage calculation
- eCommerce:
  - Real-time inventory management
  - Real-time order management
  - Search consistent with inventory levels

In these use cases, data processing usually has a latency budget of less than 5 milliseconds. This is where the popular notion of separating data from business logic needs a little refinement, with business logic split into two kinds:

- Application state control business logic: application logic stays with the application, i.e. the microservice.

- Data-driven decision-making business logic: data-driven business logic stays close to the data, i.e. in the database.

This kind of separation allows both layers to scale independently according to the performance needs of each. It does so without creating an inconsistent or diverging data set, and without a multi-layer, stitched-together approach in which the infrastructure footprint bloats artificially to ensure resilience at each layer.
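The split between the two kinds of business logic can be sketched as follows. This is a minimal illustration, not Volt Active Data's API: an in-memory SQLite database stands in for the shared data layer, and the class and method names (`InventoryStore`, `reserve_all`, `CartService`) are hypothetical. The cart service only tracks session state, while the decision about whether stock can be reserved is made next to the data in a single transaction.

```python
import sqlite3


class InventoryStore:
    """Stand-in for the shared data layer (in-memory SQLite, an assumption).

    reserve_all() is the data-driven decision logic: the stock check and
    the stock update run together, next to the data, in one transaction.
    """

    def __init__(self, stock):
        self._db = sqlite3.connect(":memory:")
        self._db.execute(
            "CREATE TABLE inventory (sku TEXT PRIMARY KEY, qty INTEGER NOT NULL)"
        )
        self._db.executemany("INSERT INTO inventory VALUES (?, ?)", stock.items())
        self._db.commit()

    def reserve_all(self, items):
        """Reserve every item or none; return True only if all were in stock."""
        try:
            with self._db:  # commits on success, rolls back on an exception
                for sku, qty in items.items():
                    cur = self._db.execute(
                        "UPDATE inventory SET qty = qty - ? "
                        "WHERE sku = ? AND qty >= ?",
                        (qty, sku, qty),
                    )
                    if cur.rowcount != 1:  # unknown SKU or not enough stock
                        raise LookupError(sku)
        except LookupError:
            return False
        return True

    def available(self, sku):
        row = self._db.execute(
            "SELECT qty FROM inventory WHERE sku = ?", (sku,)
        ).fetchone()
        return row[0] if row else 0


class CartService:
    """Application state control logic: owns the session state, not the stock rules."""

    def __init__(self, store):
        self._store = store
        self.state = "BROWSING"
        self.items = {}

    def add_item(self, sku, qty):
        if self.state != "BROWSING":
            raise RuntimeError("cart is no longer editable")
        self.items[sku] = self.items.get(sku, 0) + qty

    def checkout(self):
        if self.state != "BROWSING":
            raise RuntimeError("checkout already attempted")
        # One call to the data layer; the decision itself is made there.
        reserved = self._store.reserve_all(self.items)
        self.state = "CONFIRMED" if reserved else "REJECTED"
        return self.state
```

In this sketch the service makes one call per checkout and never reads stock levels to decide for itself, so two services sharing the same store cannot act on stale copies of the inventory.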

Where does Volt Active Data come in?

Volt Active Data is a distributed in-memory database designed to power real-time intelligent decisions on fast-moving streaming data. With its stored procedure framework and in-memory storage engine, Volt Active Data runs even the most complex business logic at very low latency and at scale, including in virtualized environments such as VMs and containers. All this comes without compromising the ACID guarantees expected from an enterprise-grade database.

If you are on your journey of moving your applications to a microservice architecture, give us a shout to see how we can help meet your data processing needs with cross-functional consistency.